A Lesson from EXXXOTICA Chicago 2026: When the Algorithm Decides Who You Are

As part of our continued expansion into culture, media, and systems-level analysis, we attended EXXXOTICA Chicago 2026 as credentialed media. What began as a simple exploratory assignment (observe, document, and review an industry adjacent to but distinct from our usual coverage of cannabis, hospitality, alcohol, and regulatory environments) quickly evolved into something more revealing. The experience itself was instructive. The people, the professionalism, and the operational discipline required to maintain visibility in a heavily scrutinized and often stigmatized sector became immediately apparent. For those unfamiliar with the adult industry or curious about its inner workings, our review stands on its own as a recommendation: this is an ecosystem far more structured, competitive, and demanding than surface-level assumptions would suggest.

At the human level, the transition into this space required recalibration. Mike, stepping into an unfamiliar interview environment, initially struggled to find his footing. The norms, the tone, and the boundaries differ significantly from more traditional conventions. It was within this moment of hesitation that informal mentorship emerged. Chloe Temple, in a brief but meaningful interaction, offered both encouragement and a practical reset, one that included a literal shot of tequila and a figurative push forward. That moment marked a shift. The first interview followed, then another, and then momentum took over.

Shortly after, we encountered Ellie Stockholm, who became the first true cold-approach interview of the event. What is notable here is not simply the interview itself, but the collaborative refinement that occurred in real time. She assisted in sharpening the question structure, adjusting framing, and effectively calibrating our approach to the environment. This is not insignificant. In industries where visibility is currency and audience retention determines viability, communication is not casual; it is engineered, practiced, and continuously optimized.

What followed was an unexpected extension of access. At the conclusion of that interaction, the conversation shifted from on-the-floor coverage to a formal podcast interview opportunity. This was not part of the original plan, nor was it anticipated. Yet it materialized organically, reinforcing a recurring principle: access is often granted not through scale, but through presence, adaptability, and timing. The interview itself provided deeper insight into the operational realities of the industry, particularly the sustained effort required to build, maintain, and protect a digital platform under constant scrutiny.

This is where the analysis moves beyond anecdote and into structure.

Over the past year, F’nAround Media has reached approximately 5.2 million viewers across platforms. Within our operational framework, we have tracked performance variability across distribution channels, noting inconsistencies that cannot be fully explained by audience behavior alone. Personal accounts with established followings often underperform relative to newly established media pages with minimal subscriber bases. Content parity does not yield distribution parity. This is not speculation; it is an observed pattern.

Against this backdrop, a controlled test presented itself. With permission, we posted a professionally appropriate image alongside Ellie Stockholm and tagged her account. There was nothing explicit, nothing suggestive, just three individuals in a standard event photograph. The result, predictably, was increased engagement across platforms. Exposure scales when proximity to larger audiences is introduced. This is not controversial; it is foundational to digital growth.

However, the anomaly emerged on Facebook.

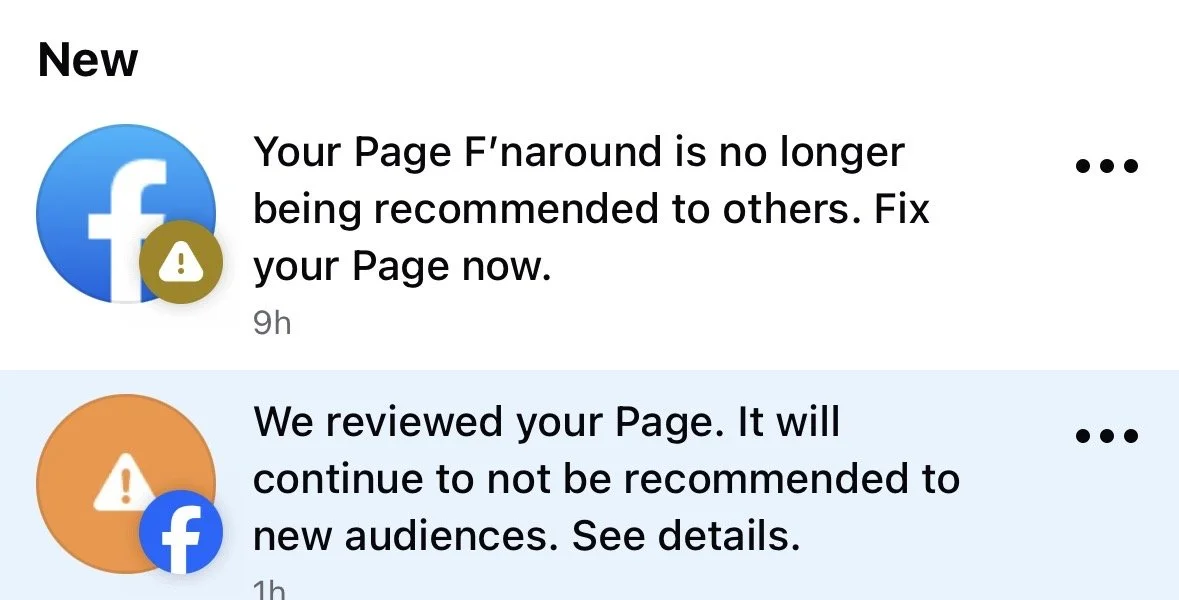

Despite historically minimal usage, the account had recently begun to experience increased visibility through platform-driven recommendations. View counts were rising, impressions expanding, yet follower conversion remained disproportionately low, an indicator of distribution without retention, or more precisely, exposure without permission to anchor that exposure into audience growth. Then, without warning, the account received a restriction notice stating that the page would no longer be recommended.

For a platform (F’nAround) built on documenting regulatory behavior and institutional response patterns, this was not an isolated anomaly; it was a familiar structure appearing in a new environment.

The appeal process provided no substantive clarity. The content in question did not violate explicit guidelines. There was no nudity, no prohibited imagery, no deviation from platform standards. The rejection of the appeal, however, directed attention to a different mechanism, one less visible and more consequential. The restriction was not solely about content. It was about association.

Within the platform’s enforcement logic exists a secondary layer of control: the suppression of distribution based not only on what is posted, but on who is present. If an individual is categorized within a restricted or sensitive domain, their mere inclusion, regardless of context, can trigger algorithmic limitation. In effect, identity becomes content. Presence becomes violation. Compliance, in this model, is no longer sufficient.

This raises a fundamental question that extends beyond a single platform or a single post. When a system is capable of restricting visibility based on association rather than action, what is being regulated is no longer expression, but proximity. The implication is significant. It suggests that individuals are not evaluated on what they say or do in context, but on classifications assigned outside of it.

Over time, these small, unchallenged constraints accumulate, less as isolated moderation decisions and more as structural weight shaping what can and cannot surface.

From a free speech perspective, this represents a shift from moderation to filtration. Traditional content moderation operates on identifiable violations: specific posts, language, or imagery that breach defined standards. Filtration, by contrast, operates preemptively. It limits reach before engagement can occur. It does not remove speech; it prevents it from being heard.

This distinction matters.

Because when visibility can be selectively reduced without overt removal, suppression becomes difficult to detect and even more difficult to challenge. There is no clear infraction to dispute, only an outcome to observe. Distribution declines. Reach contracts. Growth stalls. The system, however, remains technically compliant with its own rules.

For industries already operating at the margins of acceptability, such as adult entertainment, this creates an additional layer of structural difficulty. Growth is not solely dependent on audience interest or content quality, but on navigating an opaque network of classification systems that can limit exposure irrespective of compliance. Every follower, every view, every engagement is not simply earned; it is contested.

The broader implication extends beyond this industry alone. If platforms are willing to restrict fully compliant content based on the perceived classification of individuals involved, then the same mechanisms can, in theory, be applied across any domain. Political discourse, investigative journalism, emerging narratives: anything tied to a flagged identity or topic can be quietly deprioritized without formal violation.

This is where the conversation shifts from platform policy to systemic influence.

Because if three individuals in standard attire can trigger distribution suppression due to one tagged identity, then the question is no longer whether content is appropriate. The question is who decides which identities are promotable, which associations are permissible, and which narratives are allowed to scale.

And more importantly, who is never seen at all.

For creators within this space, the takeaway is not abstract. It is operational. The scale of their audiences is not simply a function of demand, but of resistance. Every metric achieved represents not just growth, but navigation through a system that can, at any moment, redefine the terms of visibility.

For observers outside of it, the lesson is equally clear. What appears in your feed is not a neutral reflection of reality. It is a curated output shaped by layers of decision-making that extend far beyond content itself.

The algorithm does not just decide what you see.

It decides who is allowed to exist within visibility and who is quietly removed from it.